AI optimization has improved how customer engagement programs operate.

It can test more combinations, react faster than manual workflows, and continuously adapt message selection based on user behavior. That part is real.

But there is also a hard truth hiding underneath much of the excitement around AI decisioning:

Many optimization systems fail long before the model ever runs.

Not because the models are weak.

Not because the infrastructure is missing.

Not because teams lack automation.

They fail because nobody was precise enough about what success actually means.

That is the part the industry still tends to understate.

We talk constantly about smarter models, better orchestration, faster experimentation, and more autonomous systems. But if the system is optimizing against the wrong signals, all that sophistication just makes the mistake scale faster. A model does not become intelligent simply because it updates quickly. If it is learning from shallow or misaligned incentives, it becomes highly efficient at producing the wrong kind of outcome.

That is the real problem.

And it is why so much “optimization” in marketing still looks more impressive in a dashboard than it does in the business.

Most systems do not have an optimization problem. They have a measurement problem.

A lot of engagement systems still operate as if all positive activity belongs on the same scoreboard.

An app open, a click, a purchase, a referral, a feature interaction, a return session — all of it gets blended together into some broad picture of “performance.” The assumption is that if enough activity is happening, the system must be learning something useful.

Usually, it is not.

When every action is treated as if it carries roughly the same meaning, the system does what most systems do: it gravitates toward what is easiest to find, easiest to increase, and easiest to report. It starts optimizing for activity because activity is abundant. But abundant is not the same thing as important.
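To make the scoreboard problem concrete, here is a minimal sketch in Python. The two message variants and their event counts are invented purely for illustration, and the function names are hypothetical. It shows how a blended activity count can rank a variant first even when it drives far fewer purchases.

```python
from collections import Counter

# Invented counts for two hypothetical message variants (illustration only).
variant_a = Counter({"app_open": 900, "click": 400, "purchase": 5})
variant_b = Counter({"app_open": 300, "click": 120, "purchase": 40})


def blended_activity(events: Counter) -> int:
    """Treat every event as equally meaningful and add them all up."""
    return sum(events.values())


def purchases(events: Counter) -> int:
    """Count only the outcome the message was meant to drive."""
    return events["purchase"]


for name, events in (("A", variant_a), ("B", variant_b)):
    print(name, "blended:", blended_activity(events), "purchases:", purchases(events))
```

On the blended score, variant A looks like the clear winner. On purchases, variant B is. Which one the system learns to prefer depends entirely on which number it was told to care about.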

A click is not a purchase.

A feature view is not adoption.

An app open is not retention.

And “engagement” is often just a polite way of saying we are not being specific enough about the outcome we actually want.

This is where many AI systems quietly go off course. They are not underperforming because they lack intelligence. They are underperforming because the business handed them a vague, watered-down definition of success and called it strategy.

Not every message should be judged by the same logic

Every message has an intended outcome.

A referral message is supposed to generate referrals.

A product announcement is supposed to drive feature adoption.

A promotion is supposed to influence purchases, bookings, or upgrades.

A retention message is supposed to keep a user active who might otherwise drift away.

This seems obvious when stated plainly. But most systems still do not behave as though it is true.

Instead, they evaluate all of those interactions through a generalized success model, as if they are interchangeable expressions of the same goal. That is not simplification. That is distortion.

Once that happens, optimization loses the ability to distinguish between a signal that is merely visible and a signal that is actually meaningful. The model may reward what is easy to observe instead of what matters to the business. And because the system is technically improving some metric, the problem can hide for a long time.

This is why defining success is not a secondary implementation detail. It is the foundation of whether optimization means anything at all.

Better AI starts with sharper intent

This is the context in which Reward Functions matter.

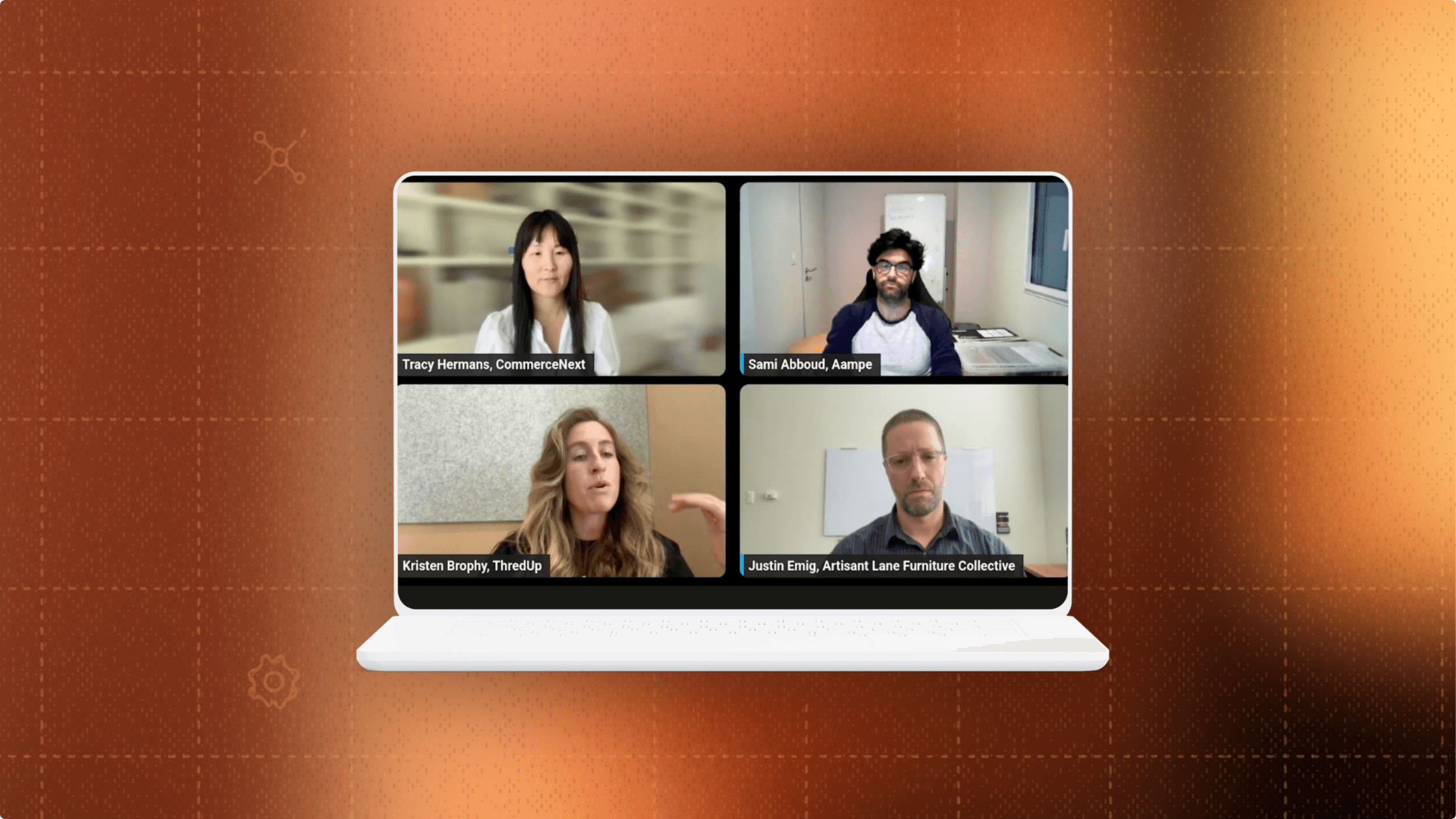

Reward Functions give teams a way to define success more deliberately by tying optimization to the events that actually reflect the purpose of a message. Instead of assuming the system will infer what matters from general activity, teams can specify the outcomes they care about and let Aampe learn from the behaviors that lead to those outcomes.
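As a rough illustration of the idea, and not a description of Aampe's actual API, the sketch below ties each message purpose to the one event that counts as success for it. The purpose names, event names, and the `reward` function are all hypothetical assumptions.

```python
from dataclasses import dataclass

# Hypothetical mapping from message purpose to its success event.
# These names are illustrative assumptions, not Aampe's schema.
TARGET_EVENT = {
    "referral": "referral_sent",
    "product_announcement": "feature_adopted",
    "promotion": "purchase",
    "retention": "return_session",
}


@dataclass(frozen=True)
class Message:
    message_id: str
    purpose: str


def reward(message: Message, observed_events: list[str]) -> float:
    """Return 1.0 only if the event matching the message's purpose occurred."""
    target = TARGET_EVENT[message.purpose]
    return 1.0 if target in observed_events else 0.0


promo = Message("m1", "promotion")
print(reward(promo, ["app_open", "click"]))     # activity, but not the intended outcome
print(reward(promo, ["app_open", "purchase"]))  # the intended outcome happened
```

The design choice worth noticing is that a click on a promotion earns nothing here. Only the event that reflects the purpose of the message counts.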

That shift is more important than it may sound.

Because the real promise of AI in marketing is not that it automates more decisions. It is that it can improve decisions over time. But improvement only happens when the system is pointed at the right target.

A faster system aimed at the wrong target is not progress. It is just drift with better branding.

Reward Functions make intent explicit. They force a level of clarity that many teams have historically been able to avoid. Instead of saying “optimize this campaign,” teams have to answer a harder question: optimize for what, exactly?

That is not a limitation. That is discipline.

And in AI systems, discipline is often what separates useful automation from expensive noise.

Good systems should learn from progress, not just outcomes

There is another mistake teams make when defining success: they assume the only thing worth learning from is the final conversion event.

In reality, meaningful outcomes usually emerge through a sequence.

A purchase may be preceded by browsing, product comparison, or cart activity.

A referral may be preceded by a visit to the referral page.

Product adoption may begin with exploration before it becomes repeated usage.

If a system waits only for the final outcome, learning becomes slower and more brittle. It misses the behavioral signals that show movement in the right direction.

This is one of the more powerful implications of Reward Functions. They do not just help the system recognize whether the final desired event happened. They also help it learn from the path toward that event. That means optimization can become more sensitive to progress, not just completion.
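One simple way to picture learning from progress is partial credit for the steps that lead to the final event. The sketch below is an assumption about how that could look, not Aampe's implementation. The event names and weights are hypothetical.

```python
# Hypothetical partial-credit weights for steps on the way to a purchase.
# Event names and weights are illustrative assumptions.
PURCHASE_PATH = {
    "product_view": 0.1,
    "add_to_cart": 0.4,
    "purchase": 1.0,
}


def progress_reward(observed_events: list[str], path: dict[str, float]) -> float:
    """Score the furthest step reached, so progress counts without being double-counted."""
    return max((path[e] for e in observed_events if e in path), default=0.0)


print(progress_reward(["app_open"], PURCHASE_PATH))                     # no progress
print(progress_reward(["product_view", "add_to_cart"], PURCHASE_PATH))  # partial progress
print(progress_reward(["product_view", "purchase"], PURCHASE_PATH))     # completed
```

Taking the maximum rather than the sum is a deliberate choice in this sketch: a user who views ten products without buying should not outscore a user who actually purchased.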

And that matters, because real customer behavior is rarely binary. Most of the value is hidden in the transition between indifference and intent.

Bad outcomes are not noise. They are part of the lesson.

There is another industry habit worth challenging: the tendency to treat negative outcomes as background noise.

An unsubscribe is not just an unfortunate side effect.

A cancellation is not just collateral damage.

A message that generates activity and also pushes the user away has not succeeded in any meaningful sense.

Yet a surprising number of systems still behave as though short-term interaction can excuse long-term damage.

That is one of the clearest signs that an optimization framework is too shallow. It knows how to reward motion, but not how to judge quality.

Durable optimization has to learn what to avoid, not just what to chase.

This is why negative signals matter so much. They create the boundaries that prevent a system from overvaluing superficial response. A system that only knows how to pursue positive activity will eventually optimize itself into bad judgment. A system that can recognize friction, penalties, and undesirable outcomes has a much better chance of learning what real performance actually looks like.
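A minimal sketch of that boundary, under stated assumptions: negative events subtract from whatever positive reward a message earned. The event names and penalty sizes below are hypothetical, chosen only to show the mechanics.

```python
# Hypothetical penalty weights for undesirable outcomes (illustrative assumptions).
PENALTIES = {
    "unsubscribe": 2.0,
    "cancellation": 5.0,
}


def net_reward(positive_reward: float, observed_events: list[str]) -> float:
    """Subtract a penalty for each negative event from the positive reward."""
    penalty = sum(PENALTIES.get(event, 0.0) for event in observed_events)
    return positive_reward - penalty


print(net_reward(1.0, ["click", "purchase"]))     # clean success
print(net_reward(0.0, ["click", "unsubscribe"]))  # activity that pushed the user away
print(net_reward(1.0, ["purchase", "unsubscribe"]))  # a sale that cost the relationship
```

With these weights, a message that wins a purchase and triggers an unsubscribe ends up with a negative score. How large the penalties should be is a business judgment, which is exactly the kind of tradeoff that has to be made explicit.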

The future of AI optimization is not more automation. It is better definitions.

As more teams adopt AI in customer engagement, the market will keep talking about speed, scale, and autonomy.

Those things matter. But they are not the deepest advantage.

The deepest advantage will come from better guidance.

The companies that win will not just have more automated systems. They will have more disciplined definitions of success. They will be better at telling those systems what matters, what progress looks like, what tradeoffs are acceptable, and what outcomes should count as failure.

That is the bigger shift behind Reward Functions.

Yes, it is a product capability.

But it also reflects a larger point about where the category needs to go.

The next wave of AI optimization will not be defined by who can generate more activity. It will be defined by who can distinguish activity from value.

And that starts earlier than most teams think.

It starts before the model.

Before the campaign.

Before the decision.

It starts with whether you were disciplined enough to define success clearly in the first place.
